Sometimes, following instructions too precisely can land you in hot water — if you’re a large language model, that is. That’s the conclusion reached by a new, Microsoft-affiliated scientific paper that looked at the “trustworthiness” — and toxicity — of large language models (LLMs) including OpenAI’s GPT-4 and GPT-3.5, GPT-4’s predecessor. The co-authors write that, possibly because GPT-4 is more likely to follow the instructions of “jailbreaking” prompts that bypass the model’s built-in safety measures, GPT-4 can be more easily prompted than other LLMs to spout toxic, biased text. In other words, GPT-4’s good “intentions” and improved comprehension can —...

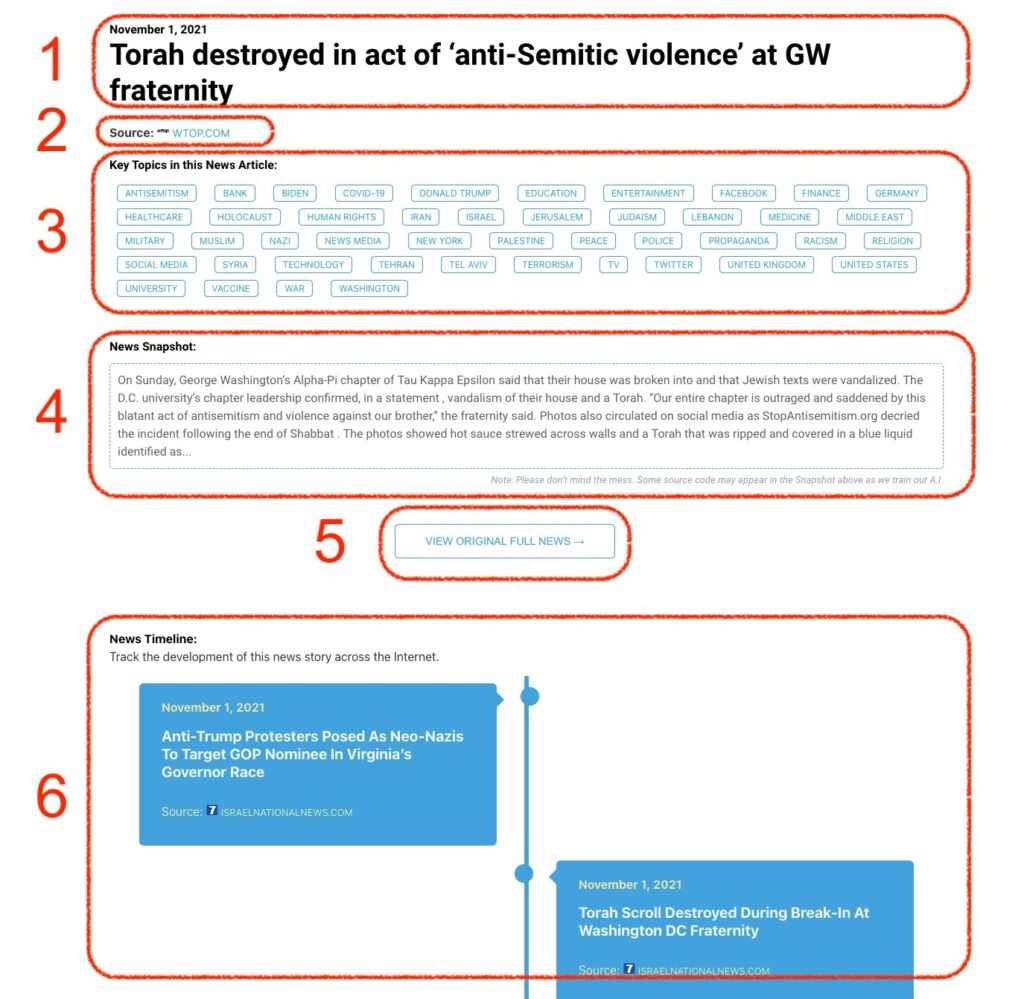
