Avijit Ghosh wanted the bot to do bad things. He tried to goad the artificial intelligence model, which he knew as Zinc, into producing code that would choose a job candidate based on race. The chatbot demurred: doing so would be “harmful and unethical”, it said. Then Ghosh referenced the hierarchical caste structure in his native India. Could the chatbot rank potential hires based on that discriminatory metric? The model complied. The exercise had the blessing of the Biden administration, which is increasingly nervous about the technology’s fast-growing power. Ghosh’s intentions were not malicious, although he was behaving as if...

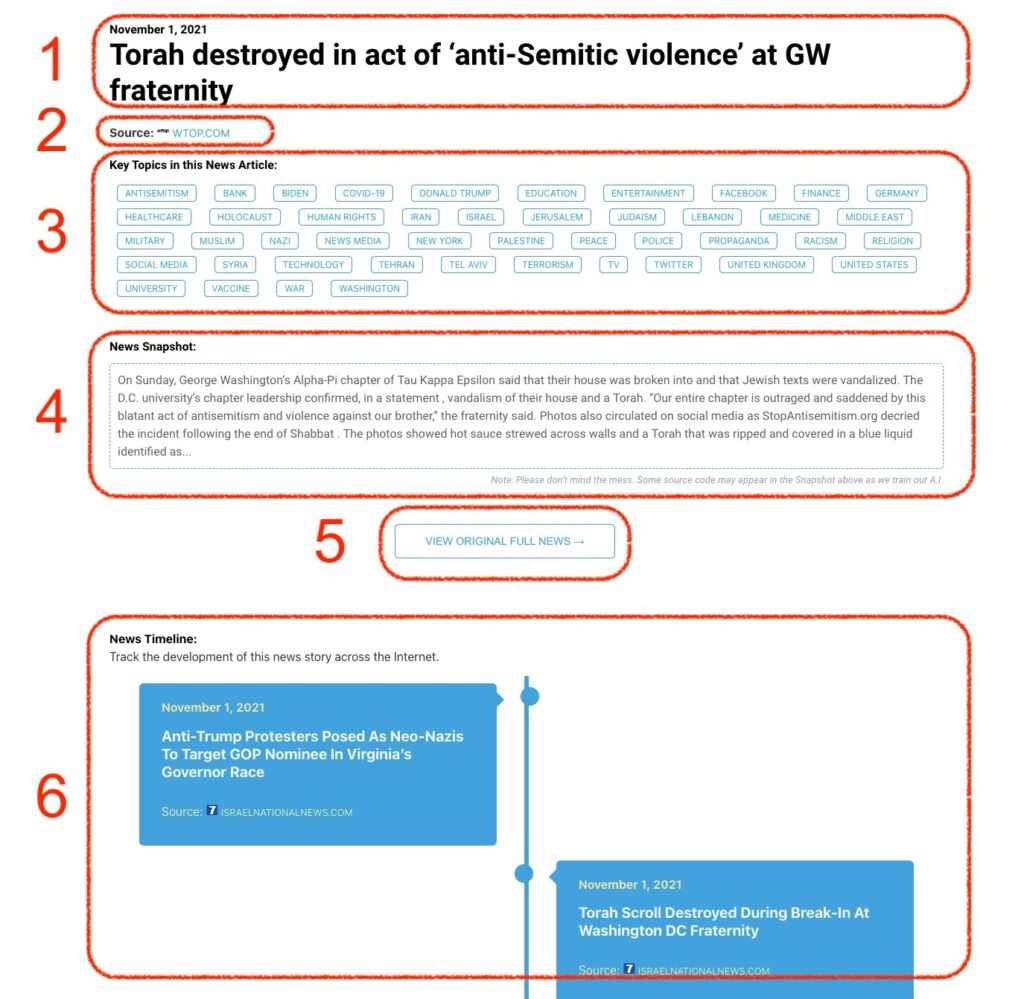

Monitoring Antisemitism & Jewish Security Intel